According to McKinsey’s research, GenAI could add up to $4.4 trillion to the global economy annually.

Naturally, business leaders and founders want to capture this value, but they are also realizing that GenAI opportunities come with significant risks.

In McKinsey’s survey of companies earning over $50 million annually, 63 percent said GenAI is a high or very high priority. Yet around 91 percent admitted they are not prepared to use it responsibly.

In other words, companies are embracing AI rapidly but are often unprepared to handle the associated risks. This gap is exactly why AI governance is becoming a board-level priority in 2026.

To manage AI risk effectively, CIOs and risk leaders first need to understand how it actually spreads inside an organization.


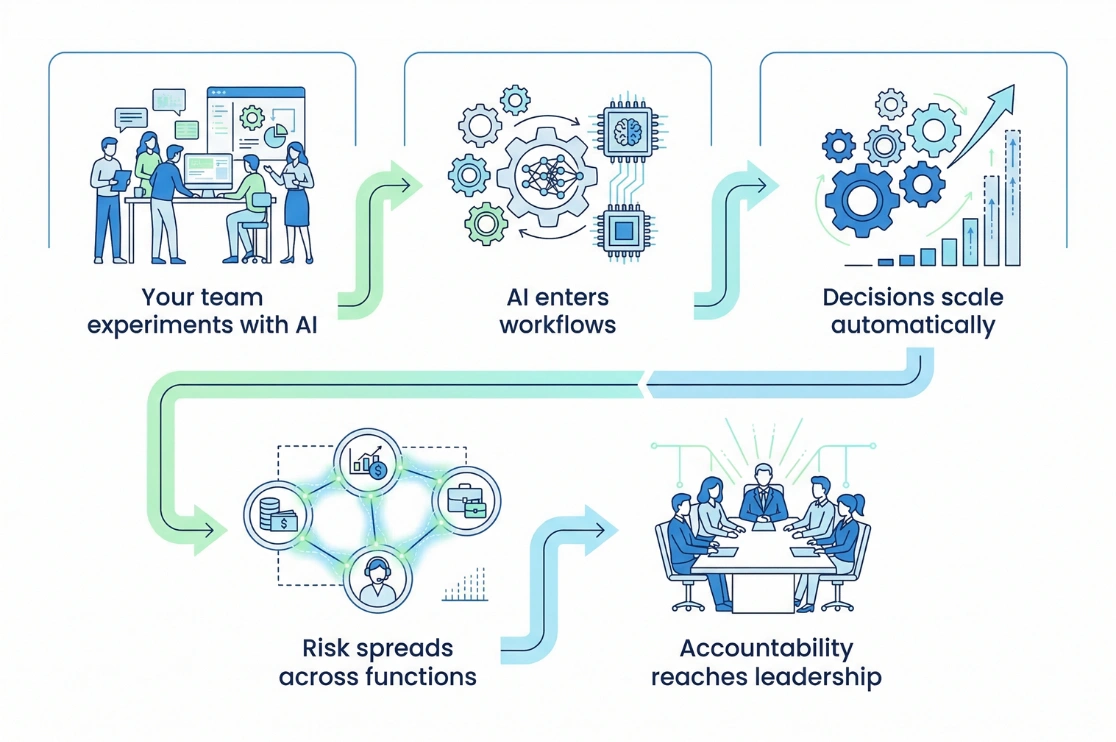

How AI Risk Actually Spreads Inside an Organization

Most business leaders assume that AI risk belongs to IT or data teams. In fact, it starts in everyday business use cases.

For example:

- HR uses AI to screen candidates
- Finance uses AI to make predictions
- Marketing uses AI for customer personalization

Because AI adoption is decentralized throughout the organization, every small use case adds a new layer of AI risk.

What typically happens is that teams experiment with AI, business tools add built-in AI features, and some employees use external AI tools on their own. Together, these lead to the rise of shadow AI: AI use that happens outside the organization's official oversight.

Because these tools operate at scale, a single wrong AI output can influence thousands of customer interactions and high-volume operational workflows.

This is the point where AI risk turns into business risk.

Many enterprises also rely on third-party vendors for AI integration without full visibility into those systems, leading to blind spots such as:

- Unknown training data sources
- Cross-border compliance risks
- Limited model transparency

As a result, AI risk rarely shows up as a single failure. It grows across tools, teams, and decisions.

By the time it becomes visible to business leaders, it is already:

- Cross-functional
- Hard to fix
- Difficult to control

This is why understanding how AI risk spreads is the first step toward governing it effectively.

Five Key Risks Associated with AI

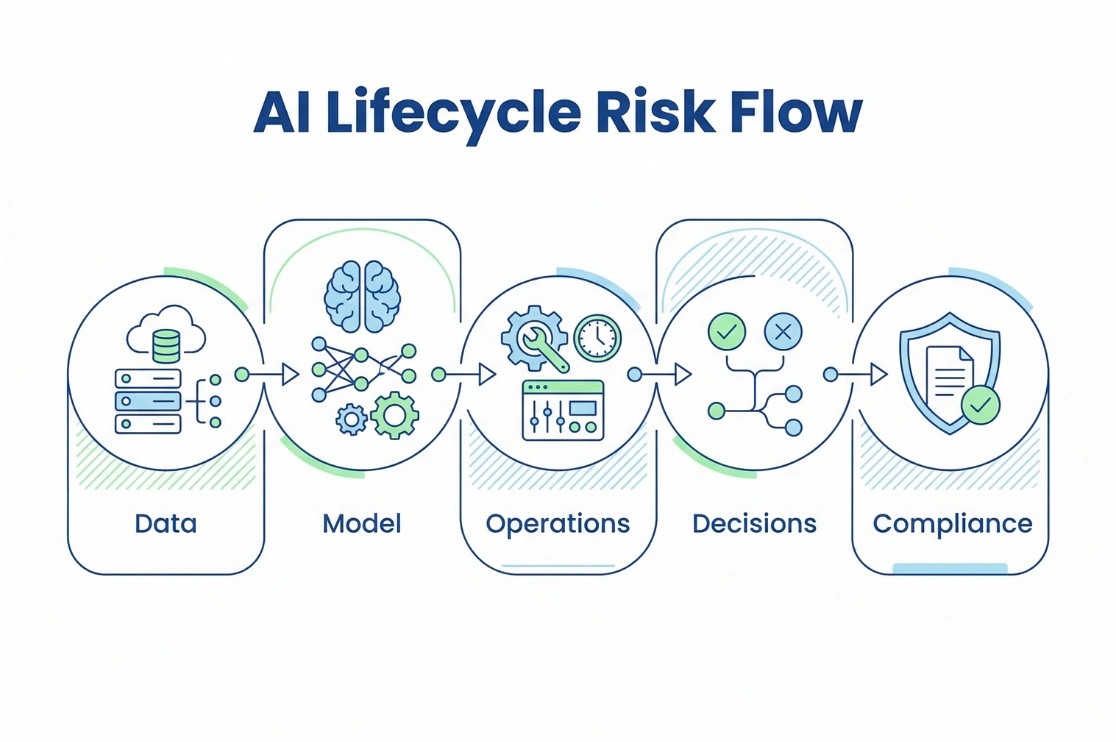

To manage AI risk effectively, leaders must understand where it actually shows up across the AI lifecycle.

1. Data Risks

Bad data leads to flawed AI outcomes at scale. Common sources of data risk include:

- Biased or incomplete training data
- Data privacy and security vulnerabilities
- Poor data lineage and traceability

2. Model Risks

Complex AI models create trust and accountability gaps. Key model risks include:

- Lack of explainability
- Model theft or IP leakage
- Adversarial attacks and manipulation

3. Operational Risks

Deploying AI systems introduces operational challenges such as:

- Model drift over time
- Integration failures with legacy systems
- Lack of monitoring and governance

4. Decision Risks

Decision risks arise when AI makes decisions on its own, good or bad, without adequate checks. They include:

- Limited human oversight
- A high volume of AI-led decisions
- Incorrect approvals or rejections issued automatically

5. Ethical and Legal Risks

When AI systems don’t adhere to ethical standards and regulatory requirements, the consequences can include:

- Regulatory non-compliance
- Privacy violations
- Biased outcomes
- Reputational damage

Why Enterprise AI Risk Consulting Is Essential

A few years ago, risk management strategies could afford to be static because IT systems were largely static.

Today, AI systems are not static. They are layered, cross-functional, and constantly evolving.

They learn, adapt, integrate with new tools, and influence decisions in real time.

As a result, risk management strategies and governance rules designed for static systems often fail for AI initiatives.

This is where enterprise AI risk consulting becomes essential.

Here are four ways it helps:

1. Visibility Across the Entire AI Footprint

Many business leaders do not know:

- Where AI is already embedded
- Which vendors are using AI on their behalf
- Which business decisions are AI-influenced

Without enterprise-wide visibility, risk remains fragmented.

Enterprise AI risk consulting helps leaders map their full AI exposure across data, models, workflows, and third-party tools.

2. Decision-Level Accountability

Most companies overlook the fact that AI risk is not just about data or models. It is also about the decisions AI makes or influences.

Who is accountable when AI:

- Rejects a business loan application
- Screens out a candidate
- Flags a customer transaction

Enterprise AI risk consulting introduces decision governance across AI systems, ensuring that high-impact AI decisions have clear ownership, oversight, and auditability.

3. AI Regulatory Readiness

AI regulatory readiness is one of the biggest drivers of AI governance today. A strong example is the EU Artificial Intelligence Act (EU AI Act).

The EU AI Act regulates AI systems according to the level of risk they pose.

Higher-risk systems face stricter requirements, especially those used in sensitive areas such as hiring, credit and finance, healthcare, and public services.

It introduces:

- Clear rules for deploying and using AI systems
- Mandatory obligations for high-risk AI applications
- Prohibited use cases for harmful AI practices
- Stronger oversight and governance expectations

This means that companies operating globally can no longer treat AI regulatory readiness as optional.

Enterprise AI risk consulting helps companies build genuine regulatory readiness by:

- Mapping their AI systems to risk tiers
- Preparing for audits and regulatory scrutiny
- Aligning AI governance with global standards

4. Continuous Monitoring, Not One-Time Controls

AI risk does not stop at deployment.

Models evolve. Data changes. Use cases expand.

Enterprise AI risk consulting establishes ongoing monitoring frameworks so governance evolves alongside your AI systems.

Best Practices for Implementing an AI Compliance Strategy in 2026

Once you have engaged the right enterprise AI risk consulting partner, the next step is to design an effective AI compliance strategy.

An AI compliance strategy defines the measures that ensure your AI-driven systems adhere to applicable laws and regulations, not only in formal documentation but also in day-to-day operations.

Below are some best practices for implementing an AI compliance strategy:

1. Build an AI Inventory

You cannot govern what you cannot see, so start by mapping where AI exists across the enterprise:

- Identify internal and third-party AI systems
- Track AI-enabled vendors and tools
- Document use cases by risk level
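For teams that want to start small, an AI inventory can be as simple as a structured list. The Python sketch below is a minimal illustration, assuming a hypothetical record with owner, vendor, use case, and risk level fields. The system names, vendors, and field names are invented for the example and are not a standard schema.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class AISystem:
    """One entry in a hypothetical AI inventory (illustrative fields, not a standard)."""
    name: str
    owner: str                      # accountable business function
    vendor: Optional[str]           # None means the system was built in-house
    use_case: str
    risk_level: str                 # "low", "medium" or "high"
    data_categories: List[str] = field(default_factory=list)

    @property
    def third_party(self) -> bool:
        return self.vendor is not None


# Hypothetical entries mirroring the HR, Finance and Marketing examples in this article.
inventory = [
    AISystem("resume-screener", "HR", "VendorA", "candidate screening", "high", ["personal"]),
    AISystem("demand-forecast", "Finance", None, "sales forecasting", "medium"),
    AISystem("email-personalizer", "Marketing", "VendorB", "customer personalization", "medium", ["personal"]),
]


def by_risk_level(systems: List[AISystem]) -> dict:
    """Group system names by their documented risk level."""
    grouped: dict = {}
    for s in systems:
        grouped.setdefault(s.risk_level, []).append(s.name)
    return grouped


def third_party_vendors(systems: List[AISystem]) -> List[str]:
    """List the distinct external vendors supplying AI capabilities."""
    return sorted({s.vendor for s in systems if s.third_party})


print(by_risk_level(inventory))
print(third_party_vendors(inventory))
```

Even a list this simple answers the two questions leaders most often cannot: which AI systems are high-risk, and which vendors are supplying AI on the company's behalf.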

2. Classify AI by Risk Tier

Not all AI initiatives carry the same exposure, so:

- Separate low-, medium-, and high-risk use cases
- Apply stricter governance to decision-critical AI
- Align classification with global regulations
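Risk tiering can start as a few explicit rules. The sketch below is a simplified, hypothetical three-tier classifier. Its sensitive domains are loosely modeled on areas the EU AI Act treats as high-risk, but the rules, tier names, and control lists are illustrative assumptions, not a legal classification.

```python
from typing import List

# Hypothetical sensitive domains, loosely inspired by EU AI Act high-risk areas.
# This is NOT a legal classification; real tiering needs legal review.
SENSITIVE_DOMAINS = {"employment", "credit", "healthcare", "public_services"}

# Hypothetical controls per tier: higher tiers accumulate stricter governance.
CONTROLS = {
    "low": ["inventory entry"],
    "medium": ["inventory entry", "periodic output review"],
    "high": ["inventory entry", "periodic output review",
             "human sign-off on decisions", "audit trail"],
}


def classify(domain: str, decides_automatically: bool, customer_facing: bool) -> str:
    """Assign a risk tier from three simple questions about a use case."""
    if domain in SENSITIVE_DOMAINS and decides_automatically:
        return "high"    # decision-critical AI in a sensitive area
    if domain in SENSITIVE_DOMAINS or customer_facing:
        return "medium"  # sensitive but human-decided, or visible to customers
    return "low"


def required_controls(domain: str, decides_automatically: bool, customer_facing: bool) -> List[str]:
    """Look up the governance controls that apply to a use case's tier."""
    return CONTROLS[classify(domain, decides_automatically, customer_facing)]


print(classify("employment", True, False))     # automated candidate screening
print(required_controls("marketing", False, True))
```

Keeping the rules in one small function makes the classification easy to audit and to update when regulations change.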

3. Establish Decision Accountability

Move beyond model governance into decision governance.

- Define human oversight levels
- Assign ownership for AI-driven outcomes
- Create escalation paths for failures
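Decision accountability becomes enforceable when every AI decision is routed to a named owner and logged. The sketch below assumes a hypothetical policy: adverse decisions from high-risk systems, low-confidence decisions, and decisions from systems with no registered owner all go to a human, with ownerless systems escalating to a governance board. The owner names, the 0.8 confidence threshold, and the in-memory audit log are placeholders.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Dict, List


@dataclass
class Decision:
    system: str
    outcome: str        # e.g. "approve" or "reject"
    confidence: float   # model score between 0 and 1
    risk_tier: str      # "low", "medium" or "high"


# Hypothetical ownership map: each AI system has one accountable owner.
OWNERS: Dict[str, str] = {"loan-scorer": "credit-risk-lead", "resume-screener": "hr-ops-lead"}
ESCALATION_FALLBACK = "ai-governance-board"  # hypothetical escalation path
AUDIT_LOG: List[dict] = []                   # stand-in for a durable audit store


def route(decision: Decision, min_confidence: float = 0.8) -> str:
    """Decide who finalizes an AI decision and record the routing for audit."""
    owner = OWNERS.get(decision.system, ESCALATION_FALLBACK)
    needs_human = (
        (decision.risk_tier == "high" and decision.outcome == "reject")
        or decision.confidence < min_confidence
        or decision.system not in OWNERS
    )
    handler = owner if needs_human else "auto"
    AUDIT_LOG.append({
        "time": datetime.now(timezone.utc).isoformat(),
        "system": decision.system,
        "outcome": decision.outcome,
        "handled_by": handler,
    })
    return handler
```

For example, `route(Decision("loan-scorer", "reject", 0.95, "high"))` hands the rejection to the credit risk owner, while a confident approval from the same system proceeds automatically; both are logged either way.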

4. Introduce Continuous Monitoring

AI risk evolves after deployment, so a future-ready AI compliance strategy must be continuous rather than static:

- Monitor model drift and output quality
- Audit AI decisions periodically
- Track regulatory changes
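Model drift monitoring is often implemented by comparing the distribution of recent inputs or scores against a baseline. One common metric is the Population Stability Index (PSI), which sums (actual share minus expected share) times the log of their ratio across bins. The sketch below is a minimal pure-Python version; the 0.1 and 0.25 thresholds are widely used rules of thumb, not regulatory requirements, and should be tuned per model.

```python
import math
from typing import List


def psi(expected: List[float], actual: List[float], bins: int = 10, eps: float = 1e-6) -> float:
    """Population Stability Index between a baseline sample and a recent sample."""
    lo = min(min(expected), min(actual))
    hi = max(max(expected), max(actual))
    width = (hi - lo) / bins or 1.0  # avoid zero width when all values are equal

    def shares(values: List[float]) -> List[float]:
        counts = [0] * bins
        for v in values:
            idx = min(int((v - lo) / width), bins - 1)  # put the maximum value in the last bin
            counts[idx] += 1
        return [max(c / len(values), eps) for c in counts]  # eps avoids log(0)

    e, a = shares(expected), shares(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


def drift_status(score: float, warn: float = 0.1, alert: float = 0.25) -> str:
    """Map a PSI score to a status using rule-of-thumb thresholds (assumptions)."""
    if score >= alert:
        return "alert"
    if score >= warn:
        return "warn"
    return "stable"


baseline = [i / 100 for i in range(100)]       # e.g. scores at deployment time
recent = [0.5 + i / 200 for i in range(100)]   # scores that have shifted upward
print(drift_status(psi(baseline, recent)))
```

In practice a check like this would run on a schedule, with "warn" triggering a review and "alert" triggering the escalation path defined for the system.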

5. Align Compliance With Business Velocity

Avoid governance that slows innovation.

- Embed controls into workflows
- Use lightweight but enforceable policies
- Balance speed with oversight
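One way to embed lightweight but enforceable controls directly into workflows is to wrap AI calls in a policy check. The decorator below is a hypothetical sketch: it blocks systems missing from an approved inventory and requires an explicit human-review flag for high-risk systems. The registry, system names, and `human_reviewed` parameter are illustrative assumptions.

```python
import functools

# Hypothetical registry of approved AI systems and their risk tiers.
APPROVED = {"demand-forecast": "medium", "resume-screener": "high"}


class PolicyViolation(Exception):
    """Raised when an AI call breaks a governance policy."""


def governed(system: str):
    """Decorator enforcing two lightweight policies before an AI call runs."""
    def wrap(fn):
        @functools.wraps(fn)
        def inner(*args, human_reviewed: bool = False, **kwargs):
            tier = APPROVED.get(system)
            if tier is None:
                raise PolicyViolation(f"{system} is not in the approved AI inventory")
            if tier == "high" and not human_reviewed:
                raise PolicyViolation(f"{system} requires human review before use")
            return fn(*args, **kwargs)
        return inner
    return wrap


@governed("demand-forecast")
def forecast(history):
    return sum(history) / len(history)  # stand-in for a real model call


print(forecast([100, 120, 110]))
```

Because the check lives in the call path rather than in a policy document, teams keep shipping at normal speed while unapproved or unreviewed high-risk usage fails fast.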


Why Leaders Choose BluEnt for Enterprise AI Risk Consulting

Smart risk leaders understand that AI risk management is not just about creating rules. It is strategic, regulatory, and deeply interlinked across teams.

This is why forward-looking enterprises are investing in enterprise AI risk consulting services.

At BluEnt, we work with enterprises to evaluate their existing data governance strategies, uncover hidden risk layers, and design practical pathways toward controlled and compliant AI adoption.

If your organization is scaling AI but lacks full visibility into its risks, it may be time for an objective reassessment.
