Enterprises in the United States are rapidly adopting generative AI to enhance productivity, customer experience, and innovation. As adoption grows, however, so do security, compliance, and risk concerns.

For security leaders, risk officers, and AI leaders, the challenge is clear: how to scale AI safely without slowing innovation. This is where AI governance consulting services come in.

US organizations need to strike a balance between control and speed. Generative AI poses risks to data privacy, intellectual property, fairness, and regulatory compliance. At the same time, companies cannot afford to delay implementing AI-based solutions.

The generative AI market is projected to reach US$91.57bn in 2026. A strong governance and security framework supports responsible AI adoption while preserving agility and competitive advantage.

Why Generative AI Security Is a Board-Level Priority in the USA

In the US, boards and executive leaders are pushing for stricter oversight of AI programs, driven by growing regulatory demands, cyber threats, and ethical concerns.

Generative AI systems can easily be exposed to sensitive data, so security and accountability need to be built in.

A structured approach to AI risk management consulting enables organizations to:

- Secure intellectual property and sensitive data
- Adhere to privacy and regulatory requirements
- Enhance accountability and transparency in AI decisions
- Reduce operational and reputational risks
- Build customer and regulator trust
- Enable responsible and scalable AI innovation

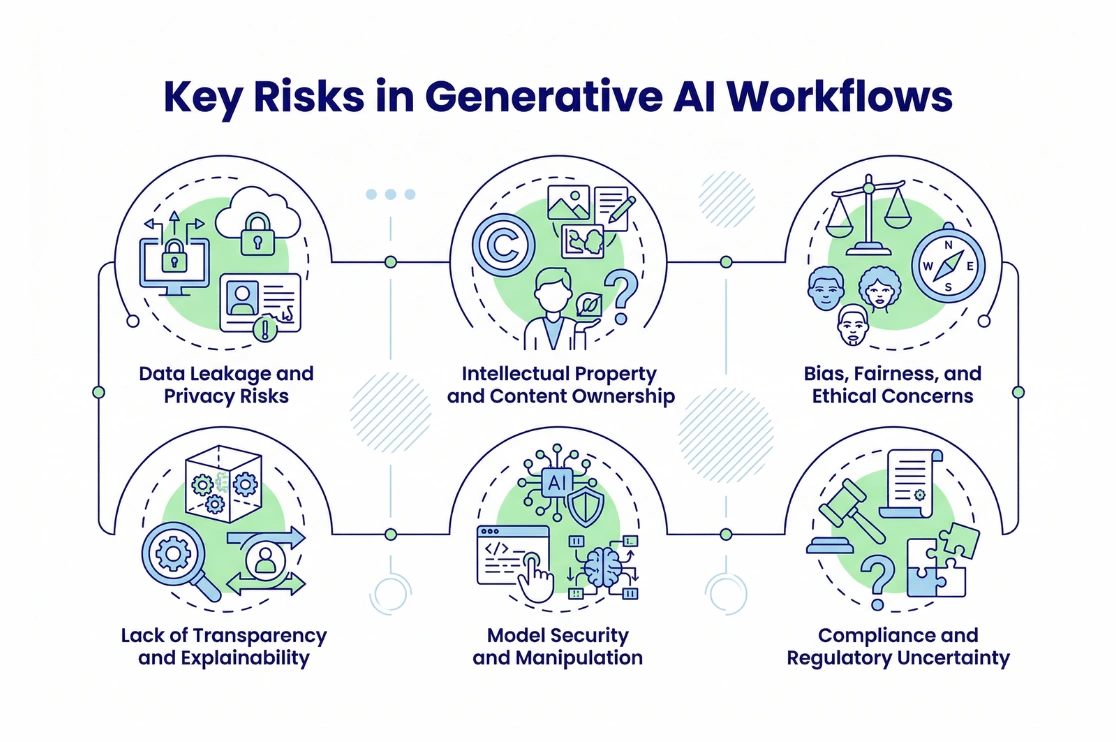

Key Risks in Generative AI Workflows

Generative AI’s overall impact on the global economy could be as large as $15.7 trillion by 2030. Many companies adopt AI quickly without first considering governance and security. This creates significant exposure across the AI lifecycle.

Data Leakage and Privacy Risks

Generative AI systems tend to work with large volumes of sensitive data. Without strong controls, confidential information may be leaked or misused, which increases legal and regulatory risk.
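One common control against this kind of leakage is to redact sensitive values before a prompt leaves the organization. The sketch below is a minimal illustration, not a complete detector: the `PATTERNS` table and the `redact` function are hypothetical names, and the two regular expressions only cover simple email addresses and US Social Security number formats.

```python
import re

# Hypothetical patterns for illustration only; a production system would
# need broader, well-tested detectors for each data type it handles.
PATTERNS = {
    "EMAIL": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
}


def redact(text: str) -> tuple[str, dict[str, int]]:
    """Replace matches of each pattern with a placeholder and count them."""
    counts: dict[str, int] = {}
    for label, pattern in PATTERNS.items():
        text, found = pattern.subn(f"[{label}_REDACTED]", text)
        counts[label] = found
    return text, counts


prompt = "Contact jane.doe@example.com about SSN 123-45-6789."
clean_prompt, redaction_counts = redact(prompt)
print(clean_prompt)
print(redaction_counts)
```

The counts returned alongside the cleaned text could feed an audit trail, so that teams can see how often sensitive values reach AI tools without storing the values themselves.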

Intellectual Property and Content Ownership

AI-generated outputs can raise questions about ownership, licensing, and copyright. Organizations need to ensure compliance and protect their assets.

Bias, Fairness, and Ethical Concerns

Bias in AI models can lead to discriminatory outcomes, which may result in reputational damage and regulatory action.

Lack of Transparency and Explainability

Many AI systems are black boxes. This breeds mistrust and makes their decisions hard to justify.

Model Security and Manipulation

Threat actors seek to exploit vulnerabilities, manipulate outputs, or poison training data. This can disrupt business operations and erode trust.

Compliance and Regulatory Uncertainty

US organizations must navigate evolving AI regulations and industry standards. Without governance, they are exposed to legal and operational risks.

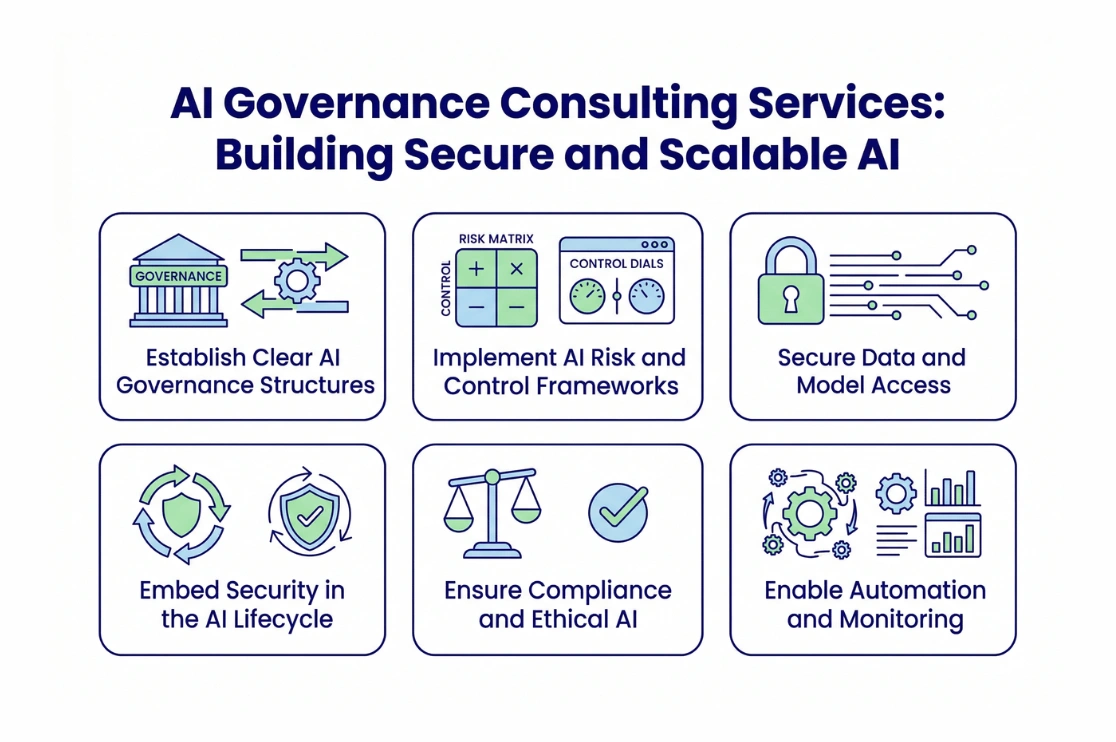

AI Governance Consulting Services: Building Secure and Scalable AI

A good governance framework helps companies secure their AI processes without slowing innovation. The aim is to integrate security and accountability across the AI lifecycle.

Establish Clear AI Governance Structures

Organizations need to define ownership, responsibility, and control for AI initiatives. Governance committees, risk teams, and business leaders must collaborate to align AI with strategic objectives.

Clearly defined roles enhance transparency and responsible decision-making.

Implement AI Risk and Control Frameworks

Businesses should establish structured policies and controls for model development, deployment, and monitoring. This brings consistency to how AI is managed across the organization.

Secure Data and Model Access

Strong identity and access management reduces the risk of unauthorized access. Data pipelines and model environments must also be secured to protect sensitive information.

This practice is in line with advanced generative AI security services and enterprise security practices.
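As a rough illustration of access management for models, the sketch below checks a user's role against an allow-list before a model action runs. The role names, the permission strings, and the `is_allowed` and `require` helpers are all assumptions made for this example; in practice these checks would usually be delegated to an enterprise identity provider.

```python
from dataclasses import dataclass

# Hypothetical role-to-permission mapping, for illustration only.
ROLE_PERMISSIONS = {
    "data_scientist": {"model:query", "model:fine_tune"},
    "analyst": {"model:query"},
    "auditor": {"logs:read"},
}


@dataclass(frozen=True)
class User:
    name: str
    role: str


def is_allowed(user: User, action: str) -> bool:
    """Return True only if the user's role explicitly grants the action."""
    return action in ROLE_PERMISSIONS.get(user.role, set())


def require(user: User, action: str) -> None:
    """Raise PermissionError when the action is not granted (deny by default)."""
    if not is_allowed(user, action):
        raise PermissionError(f"{user.name} ({user.role}) may not perform {action}")


analyst = User(name="sam", role="analyst")
require(analyst, "model:query")  # passes silently
print(is_allowed(analyst, "model:fine_tune"))
```

The design is deny-by-default: an unknown role or an unlisted action is refused, which is generally the safer starting point for sensitive model environments.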

Embed Security in the AI Lifecycle

Security should be integrated from design through deployment. Continuous monitoring, validation, and auditing help detect risks as they occur.

Ensure Compliance and Ethical AI

Organizations should align AI initiatives with legal, regulatory, and ethical standards. This covers privacy, transparency, and fairness.

Enable Automation and Monitoring

Automated governance and monitoring improve scalability and efficiency. Real-time visibility enables a faster response to risks.
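To make automated monitoring concrete, the sketch below wraps a model call so that every request is written to an audit log and requests that break a simple policy are blocked. It is an assumption-laden example: `audited`, `echo_model`, and the prompt-length limit are hypothetical stand-ins for a real model client and real policy rules.

```python
import time
from typing import Callable


def audited(
    model_fn: Callable[[str], str],
    audit_log: list[dict],
    max_prompt_chars: int = 2000,
) -> Callable[[str, str], str]:
    """Wrap a model call so each request is logged and oversized prompts are blocked."""

    def wrapper(user: str, prompt: str) -> str:
        blocked = len(prompt) > max_prompt_chars
        # Record metadata only, not the prompt text, to avoid logging sensitive data.
        audit_log.append(
            {
                "timestamp": time.time(),
                "user": user,
                "prompt_chars": len(prompt),
                "blocked": blocked,
            }
        )
        if blocked:
            raise ValueError("Prompt exceeds the configured policy limit")
        return model_fn(prompt)

    return wrapper


def echo_model(prompt: str) -> str:
    """Hypothetical stand-in for a real model client."""
    return f"response to: {prompt}"


audit_log: list[dict] = []
guarded = audited(echo_model, audit_log, max_prompt_chars=50)
print(guarded("alice", "Summarize Q3 results"))
print(len(audit_log))
```

Because the log is appended before the policy check, blocked requests remain visible to reviewers, which supports the real-time visibility described above.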

How Data Governance Strengthens AI Security

Secure AI is built on strong data governance. Without trusted data, AI models cannot reliably produce trusted and controlled results.

Integrating AI governance consulting services with enterprise data governance helps ensure:

- Trusted, high-quality training data
- Better transparency and auditing
- Better compliance and risk management
- Faster scaling of AI initiatives

Why Do US Enterprises Choose BluEnt?

BluEnt helps US organizations design safe, scalable, and responsible AI environments. Its approach aims to balance innovation, risk management, and compliance.

BluEnt offers:

- Secure generative AI workflows
- Data governance and lineage
- Compliance and ethical AI
- Continuous monitoring and assurance

Conclusion

In the US, businesses need to secure generative AI workflows without slowing down innovation. Governance, security, and accountability become more essential as adoption increases. Companies that invest in AI governance consulting services gain a competitive advantage by minimizing risk, building trust, and innovating responsibly.

A proactive strategy helps companies scale AI safely and meet regulatory and ethical requirements. It also strengthens resilience in a rapidly changing digital environment.

Request an AI Governance & Risk Review to start your journey.

Frequently Asked Questions (FAQs)

What are AI governance consulting services?

AI governance consulting services help an organization build a framework to address AI risks, ensure compliance, and enable safe, responsible, and scalable AI adoption.

Why is generative AI security important in the USA?

It protects sensitive data, ensures regulatory compliance, minimizes risk, and builds trust in AI-based decisions and enterprise innovation.

How do enterprises manage AI risks effectively?

They put in place governance frameworks, monitoring, access controls, and ethical principles to mitigate risks and keep AI operations stable and trustworthy.

How do AI governance frameworks support innovation?

They provide transparent policies, risk management, and accountability, allowing organizations to deploy AI solutions faster without sacrificing security, compliance, or business trust.

How can enterprises secure generative AI workflows?

They implement strong governance, access controls, data protection, monitoring, and compliance frameworks to achieve safe, scalable, and trustworthy AI operations.
